Get a GRASP: A 5-Part Framework for Agent Risk

Before you ship an AI agent or agent system, get aligned on risk in 5 minutes. GRASP turns vague fear into concrete controls.

We didn't lose control of production. We empowered it.

TL;DR

GRASP is a shared language for assessing AI agent and agent system risk across technical and non‑technical stakeholders:

- Governance: can we observe and intervene?

- Reach: what can it/they touch (including implicit access)?

- Agency: what can it/they do without approval?

- Safeguards: what limits harm when it's/they're wrong?

- Potential Damage: what's the realistic worst case?

If you can't answer these quickly, you're not ready to ship autonomy.

Why GRASP exists

Most conversations about AI agent safety break down in one of two ways:

On one side, non-technical leaders feel a low-grade anxiety they can't quite articulate. They hear words like autonomous, agentic, and self-improving, and know something fundamental has changed. They lack a concrete way to reason about what's genuinely risky versus what's just hype. The result: blanket fear or blind trust.

On the other side, engineers are focused on making agents impressive: better prompts, more tools, faster feedback loops, protoduction. There's enormous upside and organisational pressure to be seen delivering AI, so risk becomes an afterthought. Slowing down to address risks means confronting vague anxiety from less AI-native stakeholders, which is frustrating and time-consuming.

Most agent risk has nothing to do with model intelligence. It comes from ordinary engineering decisions made without a shared language for risk: access granted too broadly, autonomy introduced too early, safeguards assumed rather than designed. This holds whether you're deploying a single agent or orchestrating multiple agents in a system.

GRASP gives everyone—from deeply technical engineers to non-technical decision-makers—a common framework to reason about what an AI agent or agent system can actually do, what constrains it when it's wrong, and whether the resulting risk is something the organisation is consciously accepting or deliberately mitigating.

The GRASP checklist

| Dimension | Core Question | What to Check | Example |
|---|---|---|---|
| G: Governance | Can we see what it's/they're doing and stop it/them? | Owner + escalation path · Action logs (inputs, reasoning, outputs) · Monitoring/alerting · Pre-prod evals · Kill switch · Inter-agent communication visibility | Tool calls logged with trace ID; on-call can disable in <60s; multi-agent orchestrator can halt all agents |
| R: Reach | What can it/they touch (explicit + implicit)? | Credentials (scopes, TTLs) · Network (egress, prod/stg/dev) · Tooling (CLI, kubectl, terraform) · Data (PII, secrets, code) · Cross-agent access patterns | CI agent reads repo, writes branches; no access to Terraform state, secrets, or internal network |
| A: Agency | How autonomous is it/are they? | Self-trigger vs request-only · Compose new actions vs predefined · Approval requirements · Rate limits / timeouts · Agent-to-agent delegation | Can open PRs; merges and deploys require human approval |
| S: Safeguards | What limits damage when it/they act(s) unsupervised? | Canary/staged execution · Auto-rollback on failure · Compensation for irreversible actions · Idempotent operations · Circuit breakers for cascading failures | Deploys canaried per-region, auto-reverted on health degradation |
| P: Potential Damage | What's the credible worst case? | Blast radius · Irreversibility · Detection latency · Recovery path · Exposure (financial, legal, reputational) · Cascading failure scenarios | Bad config → 15-min outage in non-critical service, auto-reverted. No PII. Trivial cost. |
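The Governance example in the checklist (tool calls logged with a trace ID, plus a switch that on-call can flip) can be sketched in a few lines. This is a minimal illustration, not a reference implementation: `GovernedToolRunner`, `AgentDisabled`, and the log format are hypothetical names invented here, and a real deployment would back the kill switch with shared state rather than an in-process flag.

```python
import logging
import threading
import uuid
from typing import Any, Callable

logger = logging.getLogger("agent.tools")


class AgentDisabled(RuntimeError):
    """Raised when a tool call is attempted after the kill switch is set."""


class GovernedToolRunner:
    """Hypothetical wrapper: logs every tool call and honours a kill switch."""

    def __init__(self) -> None:
        # In-process flag only; an assumption made to keep the sketch small.
        self._killed = threading.Event()

    def kill(self) -> None:
        """Disable all further tool calls made through this runner."""
        self._killed.set()

    def call(self, tool_name: str, tool: Callable[..., Any],
             *args: Any, **kwargs: Any) -> Any:
        trace_id = uuid.uuid4().hex  # one ID ties the input and output log lines together
        if self._killed.is_set():
            logger.warning("trace=%s tool=%s blocked: kill switch set", trace_id, tool_name)
            raise AgentDisabled(f"agent disabled; refused {tool_name}")
        logger.info("trace=%s tool=%s args=%r kwargs=%r", trace_id, tool_name, args, kwargs)
        result = tool(*args, **kwargs)
        logger.info("trace=%s tool=%s result=%r", trace_id, tool_name, result)
        return result


runner = GovernedToolRunner()
total = runner.call("add", lambda a, b: a + b, 2, 3)  # total is 5
runner.kill()
# Any further runner.call(...) now raises AgentDisabled.
```

Note that this flag only blocks new calls; it does not interrupt a tool that is already running, which is one reason Safeguards is assessed separately from Governance.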

Rule: If an agent can run arbitrary CLI commands, assume it can touch anything the host can. In multi-agent systems, assume every agent can reach anything that any other agent in the system can reach.
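One way to apply this rule during an assessment is to compute a pessimistic "effective reach" rather than trusting each agent's declared permissions. The sketch below is illustrative only: the function name, the resource labels, and the idea of representing reach as sets of strings are all assumptions made for the example.

```python
from typing import Dict, Set


def effective_reach(
    declared: Dict[str, Set[str]],
    arbitrary_cli: Set[str],
    host_reach: Set[str],
) -> Dict[str, Set[str]]:
    """Return the pessimistic reach of every agent in a system.

    declared      -- what each agent is explicitly granted
    arbitrary_cli -- names of agents that can run arbitrary CLI commands
    host_reach    -- everything the host itself can touch
    """
    # Step 1: arbitrary CLI access means inheriting the host's reach.
    expanded = {
        name: (reach | host_reach) if name in arbitrary_cli else set(reach)
        for name, reach in declared.items()
    }
    # Step 2: in a multi-agent system, every agent gets the union of all reach.
    union: Set[str] = set().union(*expanded.values())
    return {name: set(union) for name in expanded}


# Hypothetical two-agent system: only the deployer has shell access.
system = effective_reach(
    declared={"planner": {"repo:read"}, "deployer": {"kubectl"}},
    arbitrary_cli={"deployer"},
    host_reach={"host:fs", "host:creds"},
)
print(sorted(system["planner"]))
# prints ['host:creds', 'host:fs', 'kubectl', 'repo:read']
```

The planner was only granted `repo:read`, yet its assessed reach includes the host's credentials, because it shares a system with an agent that has shell access.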

GRASP is intentionally pessimistic: assume agents will be wrong, humans will be slow, and systems will fail in the worst possible order.

How to use GRASP in practice

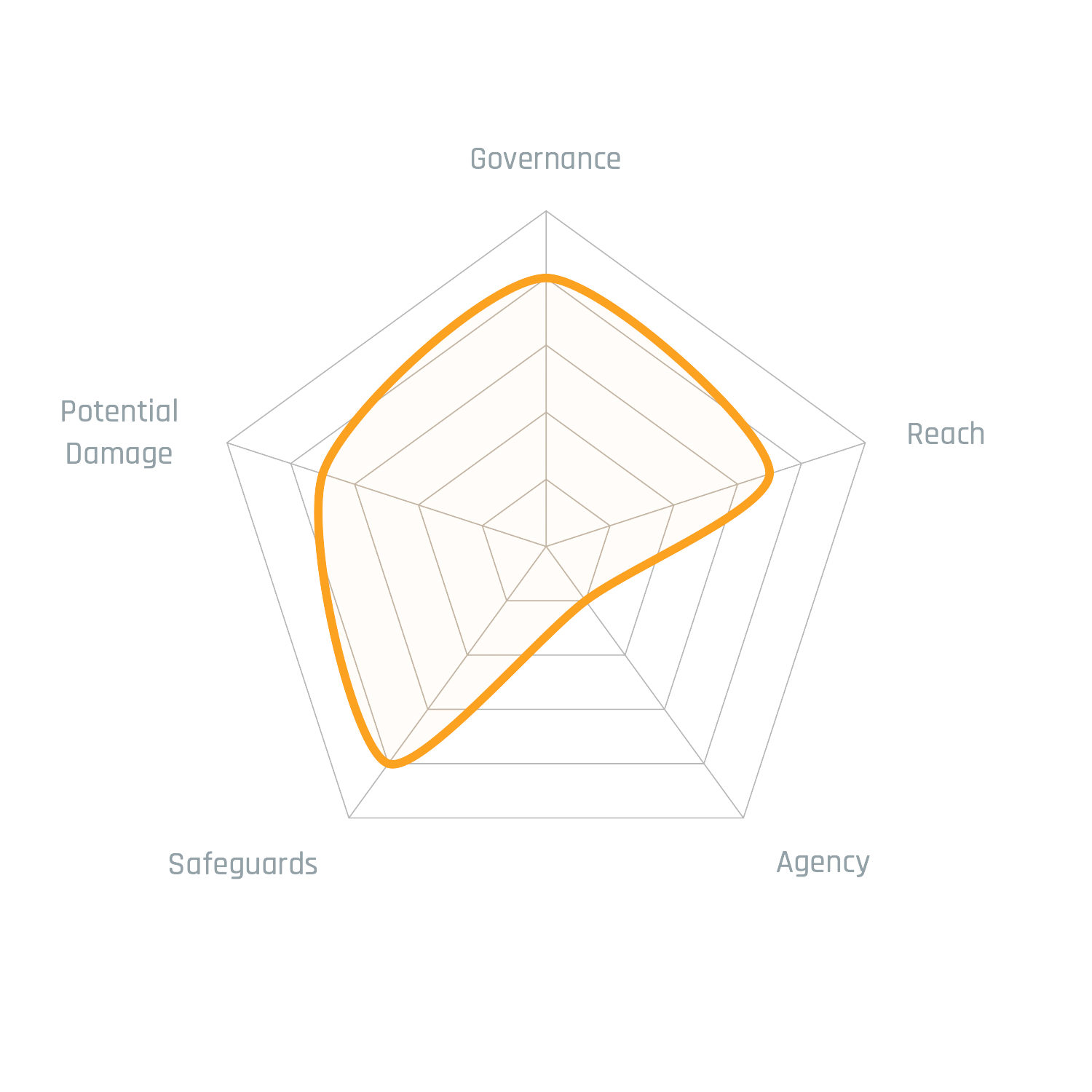

GRASP is best used as a risk profile, not a pass/fail checklist.

Each dimension represents how much risk the organisation is intentionally accepting in that area. Scores closer to the centre reflect more conservative designs; scores further out indicate deliberate trade-offs for speed, leverage, or scale. Risk isn't inherently bad. Zero risk means zero reward. GRASP's goal isn't to minimise every dimension—it's to make risk explicit, discussable, and owned.

The shape of the diagram matters more than the absolute values. Asymmetries highlight tension:

- High agency without safeguards

- Broad reach without strong governance

- Large potential damage without clear containment
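The three tensions above can be checked mechanically once a profile has been scored. The following is a rough sketch under stated assumptions: the 1 to 5 scale, the `HIGH` threshold, and the mapping of "containment" onto the Safeguards score are choices made for this example, not part of GRASP itself. Following the text, every score measures accepted risk, so a high Safeguards or Governance score means weak safeguards or weak governance.

```python
from dataclasses import dataclass
from typing import List

HIGH = 4  # assumed threshold for "a lot of risk accepted" on a 1-5 scale


@dataclass(frozen=True)
class GraspProfile:
    """Accepted risk per dimension: 1 = conservative, 5 = most risk accepted."""
    governance: int
    reach: int
    agency: int
    safeguards: int
    potential_damage: int

    def __post_init__(self) -> None:
        for name, value in vars(self).items():
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")


def tensions(p: GraspProfile) -> List[str]:
    """Return the tensions from the list above that this profile exhibits."""
    found = []
    if p.agency >= HIGH and p.safeguards >= HIGH:
        found.append("high agency without safeguards")
    if p.reach >= HIGH and p.governance >= HIGH:
        found.append("broad reach without strong governance")
    if p.potential_damage >= HIGH and p.safeguards >= HIGH:
        found.append("large potential damage without clear containment")
    return found


# Illustrative scores only, not an assessment of any real agent.
profile = GraspProfile(governance=2, reach=2, agency=5, safeguards=4, potential_damage=2)
print(tensions(profile))
# prints ['high agency without safeguards']
```

A flagged tension is a prompt for discussion rather than a verdict: the team may still accept it, but it should do so explicitly.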

GRASP assessments are point-in-time snapshots: they describe an agent or agent system at a moment in time. As agents gain new permissions, integrations, or responsibilities, or as multi-agent systems add new agents or change orchestration patterns, their GRASP profile must be revisited.
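A lightweight way to notice when a profile has gone stale is to fingerprint the agent's permissions at assessment time and compare against the current state. This is a sketch only: the function names and the shape of the permissions mapping (category to list of grants) are assumptions for illustration, and it only detects changes in whatever you choose to include in that mapping.

```python
import hashlib
import json
from typing import Dict, List


def reach_fingerprint(permissions: Dict[str, List[str]]) -> str:
    """Stable hash of a permissions mapping, independent of ordering."""
    normalised = {category: sorted(grants) for category, grants in permissions.items()}
    canonical = json.dumps(normalised, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def needs_reassessment(assessed_fingerprint: str,
                       current_permissions: Dict[str, List[str]]) -> bool:
    """True when permissions have changed since the last GRASP assessment."""
    return reach_fingerprint(current_permissions) != assessed_fingerprint


# Hypothetical permissions recorded when the assessment was signed off.
at_assessment = {"tools": ["git", "pytest"], "scopes": ["repo:read"]}
recorded = reach_fingerprint(at_assessment)

# Later, someone grants the agent kubectl.
today = {"tools": ["pytest", "git", "kubectl"], "scopes": ["repo:read"]}
print(needs_reassessment(recorded, today))
# prints True
```

Run as a CI check or scheduled job, a mismatch could open a ticket for the agent's owner to revisit the profile.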

Example — Coding Agents

The coding agent example shows how dramatically risk can change without modifying the agent itself. Run in a sandboxed CI or VM environment, the agent's reach is tightly bounded, even when it is given high agency. The same agent running on a developer's primary machine inherits the full implicit reach of that environment. GRASP surfaces this shift explicitly.

Variant A: Running on the User's Primary Host

Note: Assumes a tool like Cursor, not run in Ralphing/YOLO mode, and not routed via an AI proxy such as LiteLLM.

| Dimension | Description |
|---|---|
| Governance | Owner exists; little to no action logging; no built-in monitoring or alerting; pilot use before deployment; no kill switch |
| Reach | Full local filesystem and CLI, any stored or HCV-accessible credentials, and any CLI-accessible tools |
| Agency | Still relies on a human to trigger the agent manually; human approval required on all actions and tool calls |
| Safeguards | Code changes are version controlled; databases are backed up every 15 minutes; version control is backed up; a bricked machine can be rebuilt or redeployed |
| Potential Damage | A human accidentally approves an action that corrupts or changes data in an accessible critical database, and the change goes unnoticed for a period of time |

Variant B: Sandboxed

Note: Assumes Claude Code on an ephemeral, network-restricted VM, running in Ralphing/YOLO mode.

| Dimension | Description |
|---|---|
| Governance | As in Variant A: owner exists, no logging, pilot use before deployment. The kill switch is remote shutdown of the VMs |
| Reach | Tightly restricted: local file system and git commit actions only |
| Agency | Extremely high: runs autonomously with no dedicated human in the loop (HITL) |
| Safeguards | VM can be rebuilt; commits can be reverted |
| Potential Damage | High inference costs |

The Point

The goal is to rapidly reach a risk acceptance position, not to remove all risk. Zero risk means zero reward. Accepting the wrong risk can be crippling.

Without a shared taxonomy for discussing agent and agent system risk, conversations devolve into vague anxiety that throttles progress unnecessarily. GRASP gives teams a common language to align on what risks they're intentionally accepting, which ones need mitigation, and which ones are deal-breakers—so you can move fast on the right risks and slow down only when it matters.

Whether you're deploying a single agent or orchestrating a multi-agent system, the same framework applies: make risk visible, discussable, and owned.

References

- AURA: An Agent Autonomy Risk Assessment Framework — autonomy-driven risk

- AI Agent Governance: A Field Guide — governance for continuously acting agents

- Beyond Single-Agent Safety: A Taxonomy of Risks in LLM-to-LLM Interactions — post-decision containment and systemic safety